Machine learning is rapidly changing the way materials science is done. In projects like NICKEFFECT, ML models help researchers uncover structure–property relationships from limited data and support experimental design. Even with small datasets, ML can transform scattered measurements into actionable scientific insight.

But an accurate model on its own is not enough. To make machine learning a dependable part of research workflows, teams need robust systems to manage how models are created, evaluated, deployed, and updated. This is where MLOps becomes essential.

What MLOps Actually Is

MLOps (Machine Learning Operations) is the discipline of managing the entire lifecycle of a machine‑learning model. It combines practices from data science and DevOps to ensure models are:

- Reproducible. Every dataset, parameter, and code version is recorded.

- Traceable. Experiments can be compared and understood months later.

- Deployable. Models can be used reliably outside a notebook.

- Maintainable. Models evolve as new data is collected.

- Operational. Models become tools that researchers can actually use.

In short, MLOps turns machine learning from a one‑off experiment into a repeatable, scalable, and trustworthy process.

Why MLOps Matters in Scientific Research

Machine learning adds capabilities but also complexity.

Details such as preprocessing steps, feature choices, random seeds, and hyperparameter tuning can make results difficult to reproduce without structured tracking. As experiments progress and data evolves, models need to be monitored and retrained to remain accurate. Collaboration across multiple partners becomes challenging when everyone uses a different workflow. And even well‑performing models can be difficult to integrate into real tools and automated systems.

MLOps addresses all these issues by enforcing consistent workflows, automated tracking, and reliable deployment patterns.
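
To make the reproducibility point concrete, here is a minimal sketch of what "structured tracking" can mean for a single run: the data is fingerprinted, and the parameters and random seed are stored next to the result. It uses only the Python standard library. The names `fingerprint` and `run_experiment`, the `current_density` and `hardness` columns, and the toy data are all assumptions made for illustration, not part of the NICKEFFECT codebase.

```python
import hashlib
import json
import random


def fingerprint(rows):
    """Return a short SHA-256 digest of the dataset contents."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


def run_experiment(rows, params, seed):
    """Run a toy 'training' step and return a manifest describing the run."""
    rng = random.Random(seed)  # local RNG, so the seed fully controls the shuffle
    shuffled = rows[:]
    rng.shuffle(shuffled)
    n_train = int(len(shuffled) * params["train_fraction"])
    train = shuffled[:n_train]
    # Stand-in for a real model: the mean target value of the training split.
    mean_target = sum(r["hardness"] for r in train) / len(train)
    return {
        "data_fingerprint": fingerprint(rows),
        "params": params,
        "seed": seed,
        "metrics": {"mean_target": mean_target},
    }


# Hypothetical measurements, invented for this example.
rows = [{"current_density": d, "hardness": 200 + 10 * d} for d in range(1, 11)]
params = {"train_fraction": 0.8}

first = run_experiment(rows, params, seed=42)
second = run_experiment(rows, params, seed=42)
print(json.dumps(first, indent=2))
print("identical rerun:", first == second)
```

Because the manifest holds everything that influenced the result, a rerun with the same inputs can be checked for equality, and any change to the data shows up as a different fingerprint.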

What an MLOps Workflow Looks Like

A typical MLOps‑enabled process includes:

- Versioned data preparation and curation

- Reproducible feature engineering

- Systematic experiment tracking for metrics and parameters

- Model versioning and searchable histories

- Automated deployment pipelines

- Monitoring and retraining based on new data or drift

This structure reduces friction and allows researchers to focus on interpreting scientific results rather than managing technical logistics.
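
The last step of the list, retraining based on drift, can be sketched with a very simple check. The example below compares the mean of newly collected values of one feature against the training data and flags retraining when the shift exceeds a threshold. The function names, the threshold of 2.0, and the numbers are assumptions chosen for illustration; production systems typically rely on more robust statistical tests.

```python
from statistics import mean, stdev


def drift_score(reference, incoming):
    """Shift of the incoming mean, in units of the reference standard deviation."""
    return abs(mean(incoming) - mean(reference)) / stdev(reference)


def needs_retraining(reference, incoming, threshold=2.0):
    """Flag retraining when the incoming data has moved beyond the threshold."""
    return drift_score(reference, incoming) > threshold


# Hypothetical values of one input feature.
reference = [4.8, 5.1, 5.0, 4.9, 5.2, 5.0]  # seen during training
similar = [5.0, 4.9, 5.1]                   # new batch, same regime
shifted = [6.4, 6.6, 6.5]                   # new batch, different regime

print(needs_retraining(reference, similar))  # False
print(needs_retraining(reference, shifted))  # True
```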

How All This Is Used in NICKEFFECT

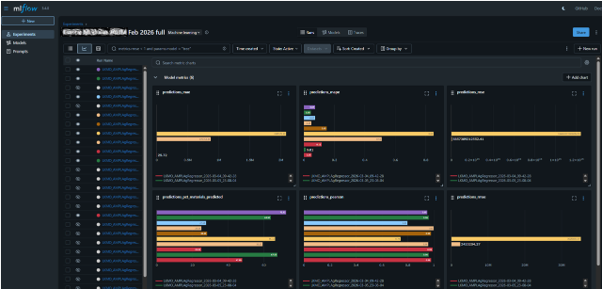

In NICKEFFECT, MLOps principles were embedded directly into the project’s digital decision‑support system. With tools like MLflow, every model follows a consistent workflow, from preprocessing and feature extraction to training and evaluation.

All experiments are fully tracked, including dataset versions, hyperparameters, metrics, and generated model artifacts. This shared experiment history ensures transparency and reproducibility across all partners. When a model shows promise, it is promoted to a managed model registry, where each version is clearly defined and linked to its originating experiment.
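
The link between a registered model version and its originating experiment can be illustrated with a small in-memory sketch. This is not the MLflow API and not the NICKEFFECT implementation. The classes `Run`, `ModelVersion`, and `Registry`, as well as the model name and values, are hypothetical and only show the bookkeeping idea.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One tracked experiment: what went in and what came out."""
    run_id: str
    dataset_version: str
    params: dict
    metrics: dict


@dataclass
class ModelVersion:
    name: str
    version: int
    run_id: str  # link back to the originating experiment


@dataclass
class Registry:
    runs: dict = field(default_factory=dict)
    versions: dict = field(default_factory=dict)

    def log_run(self, run):
        self.runs[run.run_id] = run

    def promote(self, name, run_id):
        """Register the model produced by a tracked run as the next version."""
        if run_id not in self.runs:
            raise KeyError(f"unknown run: {run_id}")
        history = self.versions.setdefault(name, [])
        model_version = ModelVersion(name, len(history) + 1, run_id)
        history.append(model_version)
        return model_version

    def origin(self, name, version):
        """Look up the experiment behind a given model version."""
        model_version = self.versions[name][version - 1]
        return self.runs[model_version.run_id]


registry = Registry()
registry.log_run(Run("run-001", "v1", {"alpha": 0.1}, {"rmse": 12.4}))
registry.log_run(Run("run-002", "v2", {"alpha": 0.05}, {"rmse": 9.8}))

promoted = registry.promote("hardness-model", "run-002")
source = registry.origin("hardness-model", promoted.version)
print(promoted.version, source.dataset_version, source.params, source.metrics)
```

Promotion fails for a run that was never tracked, so every registered version can always be traced back to its dataset version, parameters, and metrics.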

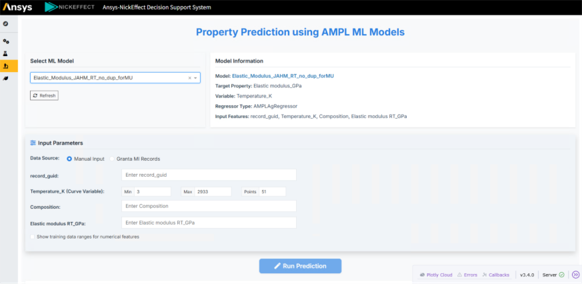

Once validated, models are packaged and exposed through REST APIs, enabling integration with automated workflows and user‑friendly applications. This allows researchers to explore predictions and uncertainty estimates without writing code or dealing with model internals.
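
As a rough sketch of what such an endpoint might look like, the example below serves a prediction with an uncertainty estimate over HTTP using only the Python standard library. Everything specific here is an assumption made for illustration: the `/predict` path, the `current_density` input, the toy ensemble of linear models, and the use of the ensemble spread as the uncertainty. The actual NICKEFFECT API may differ in all of these.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from statistics import mean, stdev

# Hypothetical ensemble of linear models, given as (slope, intercept) pairs.
ENSEMBLE = [(9.5, 201.0), (10.0, 200.0), (10.5, 199.0)]


def predict(x):
    """Return the ensemble mean and its spread as a simple uncertainty estimate."""
    outputs = [slope * x + intercept for slope, intercept in ENSEMBLE]
    return {"prediction": mean(outputs), "uncertainty": stdev(outputs)}


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/predict":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length))
        body = json.dumps(predict(float(payload["current_density"]))).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the example output quiet


def call_predict(port, current_density):
    """Client side: POST one input value and return the decoded JSON reply."""
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    body = json.dumps({"current_density": current_density})
    conn.request("POST", "/predict", body=body,
                 headers={"Content-Type": "application/json"})
    reply = json.loads(conn.getresponse().read())
    conn.close()
    return reply


server = HTTPServer(("127.0.0.1", 0), PredictHandler)  # port 0: the OS picks a free port
threading.Thread(target=server.serve_forever, daemon=True).start()

result = call_predict(server.server_port, 4.0)
print(result)

server.shutdown()
server.server_close()
```

The client only needs to send a number and read back two fields, which is what lets a graphical application or an automated workflow use the model without knowing how it was trained.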

Bringing It All Together

In NICKEFFECT, we demonstrate how modern MLOps practices can be integrated into the research process by building MLflow experiment tracking and model‑driven APIs into the decision‑support system. The result shows how machine learning can deliver traceable, reproducible insight throughout a project. This demonstration can offer useful direction for future developments and support the growing use of structured, transparent workflows in materials science.